We all know that our future cars will have many cameras on board to make our rides safer and more comfortable. But what functions will these cameras perform, and where will they go?

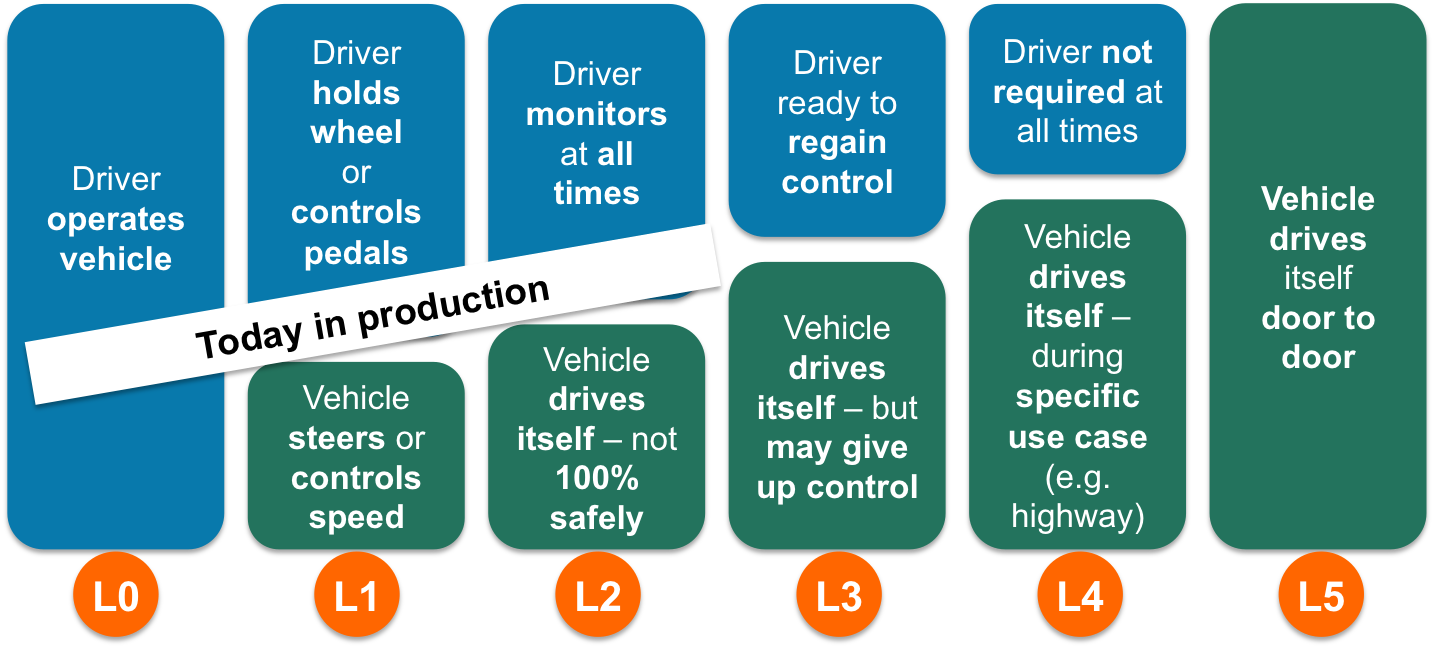

We’ve spoken about SAE’s six levels of automation before. The levels are primarily defined by who controls the steering wheel and pedals, and under which circumstances. At Level 0 the driver alone operates the vehicle, while at Level 5 the car is driven entirely by its electronics. These six levels provide a useful framework for looking at what the cameras will be used for.

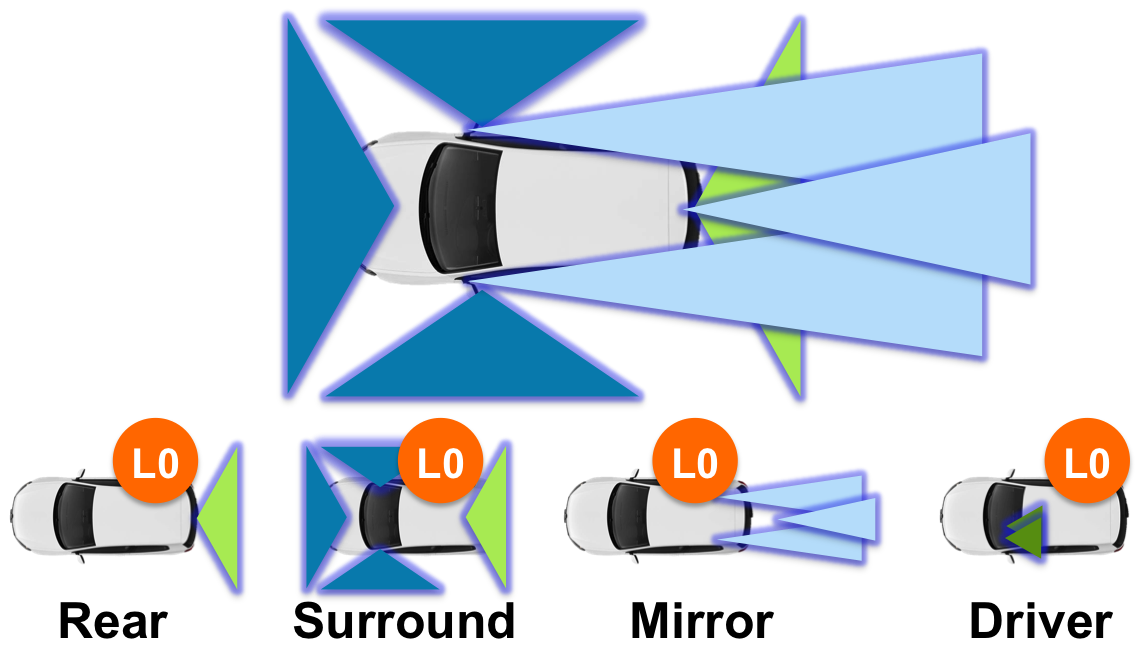

At L0 the driver operates the vehicle 100% of the time, so the cameras are primarily about extending visibility: making it easier for the driver to see and understand what’s going on around the car. Even though this may be relatively simple, we see quite a few opportunities in the market at L0. The most basic setup is a single rear camera, soon to become mandatory on new vehicles in the US. A more elaborate system uses 4 surround view cameras to present a full 360-degree view around the car. In addition, cameras can replace our rear-view mirrors. This adds another 2-3 cameras, since mirror-replacing cameras need different fields of view than the surround view cameras. We’re now up to 7 cameras just to extend visibility, and that’s without counting night-vision cameras, which are already in production to enhance forward-looking visibility at night.

Another L0 driver assistance system is driver monitoring. Here the cameras are located inside the vehicle, keeping an eye on the driver to ensure she’s alert and not distracted. Most driver monitoring systems have multiple cameras so that their view of the driver is never blocked. Of course, for insurance purposes, all of these cameras may also be used to record and store video.

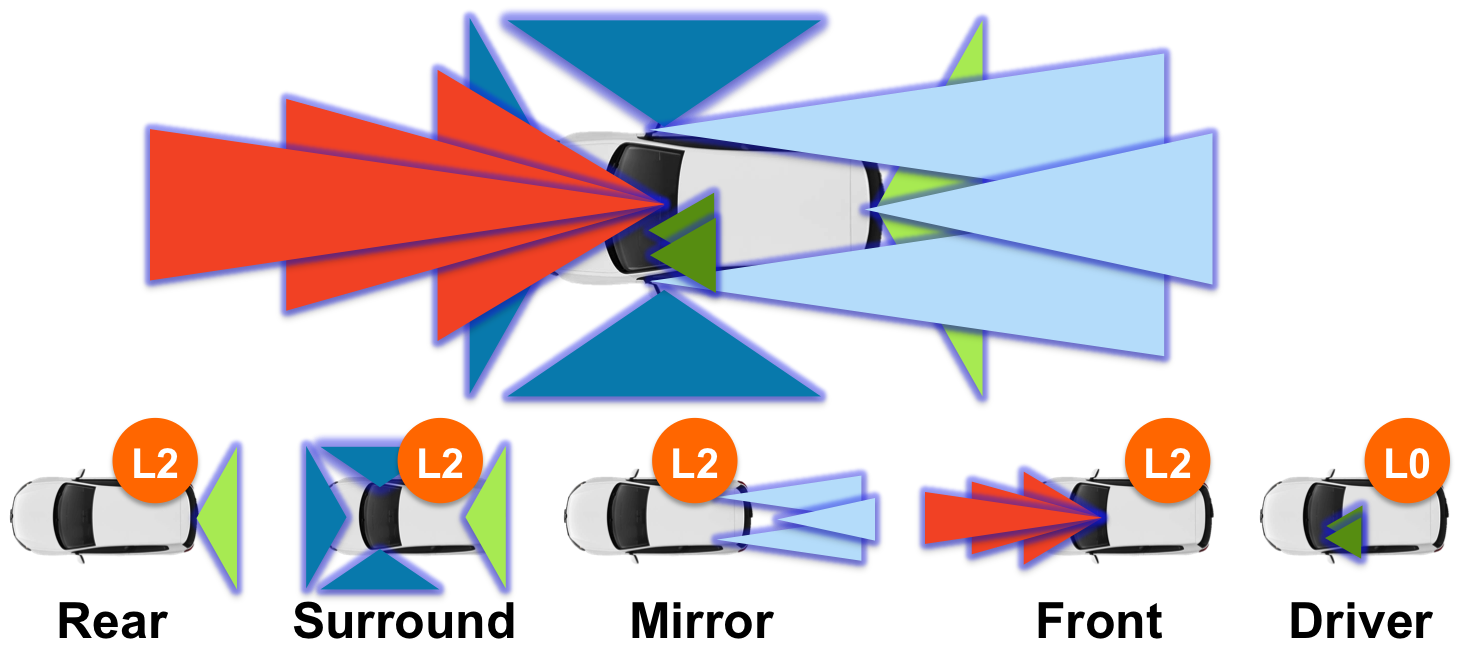

Any functionality beyond L0 requires computer vision techniques that process the captured images and extract meaningful data that can be used to control the vehicle. Many of the above-mentioned cameras will include such functionality in the near future. For example, rear-view cameras can also be used for parking assist, backover protection, or detecting cross traffic behind the car. Mirror replacement cameras can help with rear collision warning or blind spot monitoring. Surround view cameras can help with lane keeping and lane changing.

The key cameras on today’s systems that support L2, where the driver can let go of the wheel and pedals, are front-facing cameras. These typically have a narrower field of view than the forward-looking surround view camera. Spreading the sensor’s pixels over a narrower angle increases the angular resolution, which makes it possible to recognize objects farther away. The images these front-facing systems capture are typically not displayed to the driver; the cameras are used purely as sensors to understand where the car can safely go. The upcoming Euro NCAP safety rating requires such a forward-facing camera in order to detect lanes, pedestrians, and vehicles. In addition, traffic signs must be recognized, and the vehicle’s headlights controlled so as not to blind oncoming cars. Forward-looking camera systems usually include multiple cameras, each with a different field of view and range.
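To make the field-of-view trade-off for front cameras concrete, here is a rough back-of-the-envelope sketch. It assumes, as a simplification, that a sensor’s pixels are spread evenly across its horizontal field of view (real rectilinear and fisheye lenses do not do exactly this). The sensor width, the two lens angles, and the target size and distance are purely illustrative numbers, and `pixels_on_target` is a hypothetical helper, not part of any real product or library.

```python
import math


def pixels_on_target(sensor_width_px, hfov_deg, target_width_m, distance_m):
    """Approximate number of horizontal pixels covering a target.

    Simplifying assumption: the sensor's pixels are spread uniformly across
    the horizontal field of view. Real rectilinear and fisheye lenses
    distribute them differently, so treat the result as a rough estimate.
    """
    # Angular resolution: how many pixels fall into each degree of view.
    px_per_deg = sensor_width_px / hfov_deg
    # Angle subtended by a target centered on the optical axis.
    target_deg = math.degrees(2 * math.atan(target_width_m / (2 * distance_m)))
    return px_per_deg * target_deg


# Hypothetical example: a 1280-pixel-wide sensor behind a 50-degree
# front-camera lens vs. a 180-degree surround-view lens, looking at a
# 1.8 m wide car 100 m ahead.
for hfov in (50, 180):
    px = pixels_on_target(1280, hfov, 1.8, 100)
    print(f"{hfov:>3}-degree FOV: {px:.1f} px across the car")
```

With these illustrative numbers, the narrow lens puts roughly 26 pixels on the distant car versus roughly 7 for the wide lens, a 3.6x difference (180/50). That is why the same sensor behind a narrower lens can recognize objects at a longer range, at the cost of seeing less of the scene.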

In total, then, we’re up to about 12 cameras. Of course, if many of these cameras need to be stereo pairs to capture depth information, this number could double. Instead of stereo, we at videantis have primarily used Structure from Motion, which recovers depth information from a single standard camera. There’s an article in inVision magazine with more information, and we demonstrated this Structure from Motion technology running on our processor architecture at the Embedded Vision Summit earlier this year.
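A stereo rig gets depth from the fixed baseline B between its two cameras (depth Z = f·B/disparity for focal length f in pixels). Structure from Motion instead uses the car’s own movement between two frames of a single camera as the baseline. The sketch below shows only the core geometric step, triangulating one point from two frames, under strong assumptions: an ideal pinhole camera with the principal point at (0, 0), no lens distortion, no rotation, and a known forward motion (for instance from wheel odometry). It is a textbook midpoint triangulation, not videantis’s implementation; a real SfM pipeline must also match features across frames and estimate the camera motion itself. All function names are hypothetical.

```python
def sub(p, q):
    return tuple(a - b for a, b in zip(p, q))


def add(p, q):
    return tuple(a + b for a, b in zip(p, q))


def scale(p, k):
    return tuple(a * k for a in p)


def dot(p, q):
    return sum(a * b for a, b in zip(p, q))


def pixel_ray(u, v, f):
    """Viewing direction for pixel (u, v) of an ideal pinhole camera.

    Assumes the principal point is at (0, 0), no lens distortion, and no
    camera rotation between frames.
    """
    return (u / f, v / f, 1.0)


def project(point, center, f):
    """Project a 3D point into a camera at `center` (translation only)."""
    x, y, z = sub(point, center)
    return (f * x / z, f * y / z)


def triangulate_midpoint(c1, d1, c2, d2):
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + r*d2."""
    w0 = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        # Parallel rays: with pure forward motion this happens for points
        # straight ahead (at the epipole), where depth cannot be recovered.
        raise ValueError("rays are (nearly) parallel; depth is unobservable")
    s = (b * e - c * d) / denom  # distance along the first ray
    r = (a * e - b * d) / denom  # distance along the second ray
    p1 = add(c1, scale(d1, s))
    p2 = add(c2, scale(d2, r))
    return scale(add(p1, p2), 0.5)


# Hypothetical example: focal length 1000 px, the car moves 1 m forward
# between two frames, and the point is offset (2 m, 1 m) and 20 m ahead.
f = 1000.0
cam1, cam2 = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
point = (2.0, 1.0, 20.0)
u1, v1 = project(point, cam1, f)
u2, v2 = project(point, cam2, f)
estimate = triangulate_midpoint(cam1, pixel_ray(u1, v1, f), cam2, pixel_ray(u2, v2, f))
print(estimate)  # close to (2.0, 1.0, 20.0)
```

With noise-free pixel coordinates the two rays intersect exactly, so the midpoint recovers the original point. With real, noisy detections the rays only nearly intersect, and the accuracy drops for points close to the direction of travel, where the two viewing rays become almost parallel.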

Did I miss any cameras? Let me know and I will add them in a follow-on article.