In 2016 we wrote an article titled “what are all these automotive cameras doing?” about how and where we saw automotive cameras being integrated into consumer vehicles. These cameras are combined with intelligent visual processing, primarily to enhance safety and to relieve the driver of some of the work of operating the vehicle. At the time, we counted 12 cameras in the vehicle: 3 in the front camera, 4 for surround view and parking, 3 for mirror replacement, and 2 to monitor the driver.

The latest Tesla vehicles actually come pretty close. A new Tesla today has 9 cameras on board: 3 front cameras with different fields of view, 4 on the sides, 1 at the rear, and a new in-cabin camera just above the rear-view mirror. The in-cabin camera was added recently but is reportedly not in use yet.

Giving a car about 10 eyes seems like it should be enough. After all, humans can safely operate a vehicle with just two eyes. And even if you only have one functioning eye, you can still legally operate a vehicle in most countries without needing extra measures.

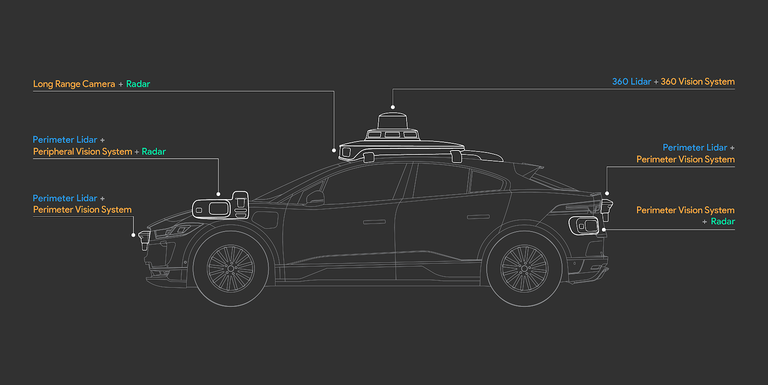

But recently Waymo announced that their latest vehicles have no fewer than 29 cameras on board. That is about 2.4x the 12 cameras we had predicted would be enough just 4 years ago! And in addition to the 29 cameras, there are 5 lidars on board, 1 for long range and 4 for closer-proximity sensing, as well as 6 radars. That’s 40 powerful sensors that Waymo’s designers deemed necessary for a fully autonomous vehicle to sense its surroundings with enough detail and precision to safely and swiftly guide the car to its destination. The cameras have different fields of view: some focus on the area near the vehicle, while others can spot pedestrians and stop signs even 500 meters away. Waymo published a video and an article that give a bit more background, but not many details are available.
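To make the numbers above easy to check, here is a minimal tally of the sensor counts quoted in this article. The dictionary and variable names are purely illustrative; only the counts themselves come from the text.

```python
# Sensor counts as quoted in this article; all names are illustrative only.
estimate_2016 = {
    "front": 3,
    "surround_view_and_parking": 4,
    "mirror_replacement": 3,
    "driver_monitoring": 2,
}
tesla = {"front": 3, "side": 4, "rear": 1, "in_cabin": 1}
waymo = {"cameras": 29, "lidars": 5, "radars": 6}

cameras_2016 = sum(estimate_2016.values())   # cameras in our 2016 count
tesla_cameras = sum(tesla.values())          # cameras on a new Tesla
waymo_sensors = sum(waymo.values())          # cameras + lidars + radars
camera_ratio = waymo["cameras"] / cameras_2016

print(f"2016 estimate: {cameras_2016} cameras")
print(f"Tesla:         {tesla_cameras} cameras")
print(f"Waymo:         {waymo_sensors} sensors in total")
print(f"Waymo cameras vs. 2016 estimate: {camera_ratio:.1f}x")
```

The tally gives 12 cameras for the 2016 estimate, 9 for Tesla, and 40 sensors in total for Waymo, with Waymo carrying roughly 2.4 times as many cameras as the 2016 count.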

Cost also does not seem to be a top priority for Waymo. The average price of a passenger vehicle around the world is $27K. This doesn’t leave much room for 40 sensors and a trunk half full of AI compute electronics, which the AI-based computer vision algorithms need in order to fuse and process all this data.

Waymo has been working on self-driving vehicles for well over a decade; they know what they’re doing. But will every vehicle have 40 sensors on board in a few years?

What we see at videantis is that the automotive industry is adopting intelligent cameras, radar and lidar at an impressive pace. But there’s a wide range of requirements. Some vehicles indeed adopt more than 10 cameras, but front cameras, surround view, rear, mirror-replacement, and driver monitoring are still the key applications for the mainstream vehicle segment. One thing is clear: whether you have 40 sensors, 10, or just a single one in the vehicle, you need a very efficient high-performance AI and vision processor architecture that can handle the wide variety of processing requirements. Whether it’s AI, computer vision, imaging, or data fusion, the unified videantis processor architecture can run it. And just as important, the architecture is scalable and software programmable, resulting in maximum reuse of software and semiconductor technology across the OEM’s vehicle lineup, whether the vehicles are fully autonomous or include just a couple of cameras for safety, and whether they are this year’s models or those of ten years from now.