New deep learning product launched

The v-MP6000UDX processor scales from a typical 8 cores up to 256 cores, with 64 MACs per core. This results in industry-leading performance, scaling to 25 TMAC/s and 16,384 MACs per cycle, while remaining very low-power and bandwidth-efficient.
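As a quick sanity check on these figures, the peak MAC count is simply the number of cores multiplied by the MACs per core. The short Python sketch below reproduces that arithmetic. The function names are illustrative, and the clock rate it prints is only what the two quoted numbers imply when divided, not a published specification.

```python
# Back-of-the-envelope check of the quoted throughput figures.
# Function names are illustrative; the clock rate is derived, not a published spec.

def macs_per_cycle(cores: int, macs_per_core: int = 64) -> int:
    """Peak multiply-accumulates per clock cycle for a given core count."""
    return cores * macs_per_core


def implied_clock_ghz(tmacs_per_second: float, macs_cycle: int) -> float:
    """Clock rate (GHz) implied by a TMAC/s figure and a MACs-per-cycle figure."""
    return tmacs_per_second * 1e12 / macs_cycle / 1e9


if __name__ == "__main__":
    for cores in (8, 256):
        print(f"{cores} cores: {macs_per_cycle(cores)} MACs per cycle")
    peak = macs_per_cycle(256)
    print(f"Implied clock at 25 TMAC/s: {implied_clock_ghz(25, peak):.2f} GHz")
```

With 256 cores this gives the 16,384 MACs per cycle quoted above; the typical 8-core configuration works out to 512 MACs per cycle.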

CES demo video

We’re the only company that runs all these tasks on a single unified processing architecture. This simplifies SOC design and integration, eases software design, reduces dark silicon, and provides additional flexibility to address a wide variety of use cases.

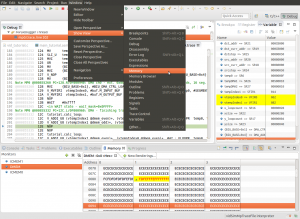

New SDK release 2017.3.1

For a complete overview of the changes, please see the release notes document VDC1990 version 1.6 in your distribution.

Are you integrating SOCs that have videantis processors inside? Contact us to learn more about how we can help you reach the highest performance and get to market faster.

Industry news

Google turns Chrysler minivans into autonomous vehicles

Waymo is moving from R&D to true deployment and operations of its self-driving vehicles. Two months after the Alphabet self-driving car spinoff announced it would start running a truly driver-free service in Phoenix this year (as in, cars romping about with no one at the wheel), the company now unveils how it will do it: with the help of thousands more Chrysler Pacifica hybrids.


TED talk from YOLO’s creator

Joseph Redmon works on the YOLO (You Only Look Once) system, an open-source method of object detection that can identify objects in images and video — from zebras to stop signs — with lightning-quick speed. In a remarkable live demo, Redmon shows off this important step forward for applications like self-driving cars, robotics and even cancer detection.


Upcoming events

| Event | Date and location | Description |
| --- | --- | --- |
| Mobile World Congress | 26 Feb – 1 March, 2018, Barcelona, Spain | See us at the largest mobile event of the year. |
| Embedded Vision Summit | 22 – 24 May, 2018, Santa Clara, California | The premier event for product creators who want to bring visual intelligence to products. |

Schedule a meeting with us by sending an email to sales@videantis.com. We’re always interested in discussing your video, vision, and deep learning SOC design ideas and challenges. We look forward to talking with you!
